2022 / EMBODIMENT. COGNITION. AI.

February to September 2022

MULTIMODALITY. COGNITION. SOCIETY.

TWO EVENTS > Institute -and- Training School

Two events, (1) an Institute and (2) a Training School, will jointly present a consolidated perspective on the theoretical, methodological, and practical understanding of multimodality research and its applications:

- the “Institute on Multimodality” emphasises a confluence of perspectives from Minds, Media, and Technology, aimed at understanding how multimodality shapes the socio-semiotic interpretation and propagation of interactional and communicative artefacts.

- the Training School on “Representation Mediated Multimodality” emphasises (multimodal) sensemaking by means of grounded representation, reasoning, and learning at the interface of language, knowledge representation and reasoning in AI, and visuo-auditory computing.

- Artificial Intelligence & Machine Learning

- Media and Communications

- Cognitive Science

- Semiotics

- Data Science

- Cognitive Vision

- Computational Linguistics

- Computational Models of Narrative

- Speech and Language Technologies

Fully funded participation is possible either by invitation, or for select applicants who respond to the open call for participation for the respective events.

INSTITUTE ON MULTIMODALITY > MINDS. MEDIA. TECHNOLOGY.

@ZiF - Center for Interdisciplinary Research / Bielefeld, Germany - Aug 28 to Sep 6 2022

TRAINING SCHOOL > REPRESENTATION MEDIATED MULTIMODALITY

EU COST ACTION Multi3Generation / @Schloss Etelsen, Germany - Sep 26-30 2022

Further Details > https://codesign-lab.org/multimodality.html

Seattle, United States / Tutorial

Spatial Cognition and Artificial Intelligence:

Methods for In-The-Wild Behavioural Research in Visual Perception

The tutorial on “Spatial Cognition and Artificial Intelligence” addresses the confluence of empirically based behavioural research in the cognitive and psychological sciences with computationally driven analytical methods rooted in artificial intelligence and machine learning. This confluence is addressed against the backdrop of human behavioural research concerned with “in-the-wild”, naturalistic, embodied, multimodal interaction. The tutorial presents:

- an interdisciplinary perspective on conducting evidence-based (possibly large-scale) human behaviour research from the viewpoints of visual perception, environmental psychology, and spatial cognition.

- artificial intelligence methods for the semantic interpretation of embodied multimodal interaction (e.g., rooted in behavioural data), and for the (empirically driven) synthesis of interactive embodied cognitive experiences in real-world settings relevant both to everyday life and to professional creative-technical spatial thinking.

- the relevance and impact of research in cognitive human factors (e.g., in spatial cognition) for the design and implementation of next-generation human-centred AI technologies.

ETRA 2022 - 14th ACM Symposium on Eye Tracking Research and Applications

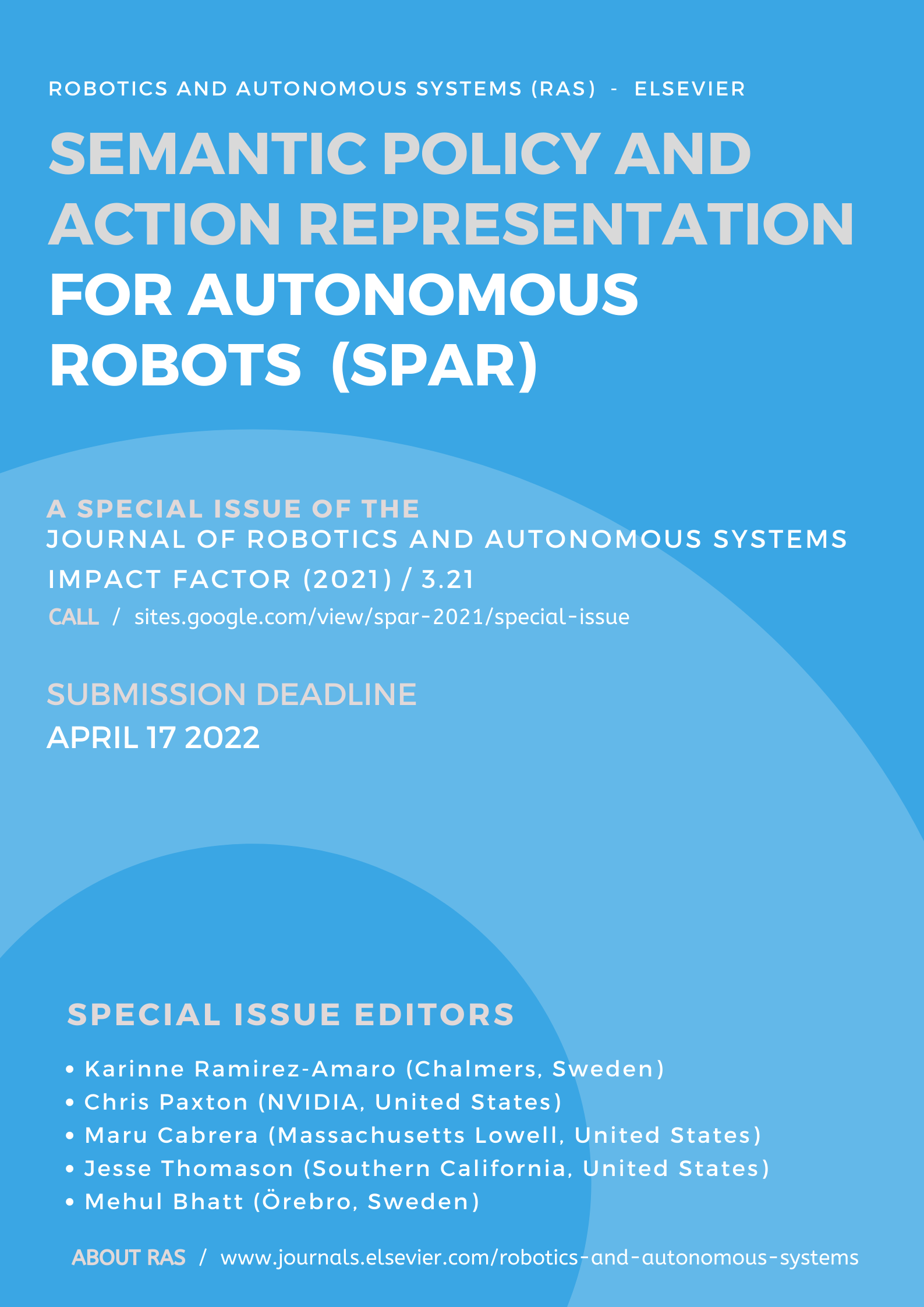

Robotics and Autonomous Systems (Elsevier) / Special Issue

Semantic Policy and Action Representations for Autonomous Robots

The journal Robotics and Autonomous Systems (RAS) focuses on research into fundamental developments in the field of robotics, with special emphasis on autonomous systems. An important goal of the journal is to extend the state of the art in both symbolic and sensory-based robot control and learning in the context of autonomous systems.

We solicit original research contributions for the upcoming RAS special issue that directly address the scientific scope of the SPAR workshop. Please note that the special issue is open to all interested contributors; participation or presentation in the SPAR workshop is not a prerequisite for submitting a paper to the special issue.

- Task and Motion Planning

- Explainable and Interpretable Robot Decision-Making methods

- Active and Context-based Vision

- Cognitive Vision and Perception - Semantic Representations

- Commonsense reasoning about space and motion (e.g., for policy learning)

- Task-oriented and Perception-informed Language Grounding

- Task and Environment Semantics

- Robot Learning from Demonstration and Exploration

- Paper submissions open (via the Elsevier submission system): Dec 1 2021

- Final paper submission deadline: April 17 2022